Uses of OCR software

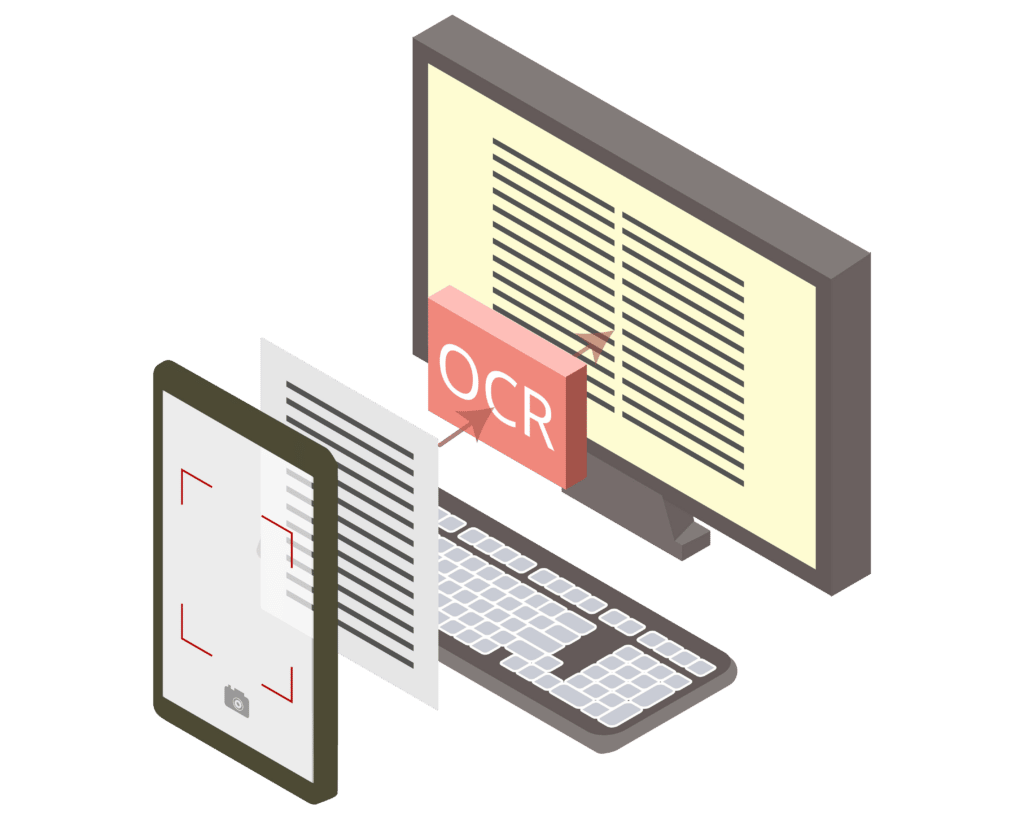

What began as a means to help the visually impaired has now become a staple of business process automation: OCR, short for “optical character recognition”, is a software technology that recognizes typewritten (or even handwritten) text on scanned document images and converts it into a machine-readable format. The results can then be processed further on a computer.

The ability to convert printed paper documents into machine-readable text has made it feasible to digitize entire libraries for future generations. In commercial use, it also facilitates data entry, machine translation, information mining, and many other processes across industries.

Banking and insurance are two sectors that benefit immensely from OCR technology, since they have traditionally relied heavily on paper-based documents. Application forms, customer records, money orders, receipts, bank statements, insurance certificates, and more: all of these are now being digitized, which makes their data readily accessible, reduces the number of printed documents, and minimizes errors caused by manual processing.

Optical character recognition vs. intelligent character recognition

OCR is often used as an umbrella term for several related methods of text recognition. However, there are some important differences between these approaches:

- Optical character recognition (OCR proper): Single typewritten characters

- Optical word recognition (OWR): Whole typewritten words

- Intelligent character recognition (ICR): Single typewritten or handwritten characters, recognized with the help of machine learning

- Intelligent word recognition (IWR): Whole typewritten or handwritten words, recognized with the help of machine learning

Initially, all OCR software was proprietary, but nowadays, open-source engines exist as well. Tesseract OCR is the most widely used and has more than 45,000 stars on GitHub. Version 4 introduced a neural network (LSTM) based engine for faster and more accurate recognition.

In fact, optical character recognition now works so well that it’s easy to forget just how many different steps are necessary to convert analog text into data. Let’s now take a look at some of them.

How optical character recognition software works

Before the process of character recognition even begins, the input document must be binarized, or in other words, turned into black and white. The goal is to achieve maximum contrast between the text and the background.

However, most scanned images also contain minor artifacts from dust or image compression. These need to be eliminated as much as possible by using an appropriate binarization threshold. If this threshold is too high, the characters themselves will lose their contours, but if it is too low, artifacts will remain in the image and interfere with the OCR process. A popular algorithm to find a suitable threshold is Otsu’s method.
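To make the idea of an automatically chosen threshold more concrete, here is a minimal sketch of Otsu's method in plain Python. It assumes an 8-bit grayscale image flattened into a list of values from 0 to 255 and dark text on a light background. The function names `otsu_threshold` and `binarize` are illustrative and not taken from any particular OCR engine.

```python
def otsu_threshold(pixels):
    """Return the gray level t (0-255) that maximizes between-class variance.

    Pixels with a value <= t form the dark class, pixels > t the light class.
    """
    histogram = [0] * 256
    for value in pixels:
        histogram[value] += 1

    total = len(pixels)
    sum_all = sum(level * count for level, count in enumerate(histogram))

    best_threshold = 0
    best_variance = -1.0
    weight_low = 0  # number of pixels at or below the candidate threshold
    sum_low = 0     # sum of the gray values of those pixels

    for t in range(256):
        weight_low += histogram[t]
        sum_low += t * histogram[t]
        if weight_low == 0:
            continue  # no dark pixels yet, nothing to separate
        weight_high = total - weight_low
        if weight_high == 0:
            break  # every pixel is in the dark class from here on

        mean_low = sum_low / weight_low
        mean_high = (sum_all - sum_low) / weight_high
        # Between-class variance, up to a constant factor of 1 / total**2
        variance = weight_low * weight_high * (mean_low - mean_high) ** 2
        if variance > best_variance:
            best_variance = variance
            best_threshold = t

    return best_threshold


def binarize(pixels, threshold):
    """Map dark pixels (<= threshold) to 1 (ink) and light pixels to 0 (background)."""
    return [1 if value <= threshold else 0 for value in pixels]


# Tiny made-up sample: five dark "ink" values and five light "paper" values.
sample = [20, 25, 30, 22, 200, 210, 205, 220, 215, 28]
threshold = otsu_threshold(sample)
print(threshold, binarize(sample, threshold))
```

The method picks the gray level that best separates the histogram into two groups, so the threshold does not have to be set by hand for every scan. Production software usually relies on an optimized library implementation and may combine it with noise filtering.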

After binarization, the OCR software draws outlines around the characters to create so-called connected components. These are organized into lines and then broken down into words by looking at the spacing between them. Finally, individual characters are mapped to their digital equivalents using either matrix matching or feature extraction. Whereas the former compares each character with a database of characters in different fonts, the latter takes topological features like open areas and line intersections into account. For example, an “H” always consists of two vertical lines intersected by a horizontal one more or less in the middle. Feature extraction thus works with a wider variety of fonts.
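The first two steps described above, finding connected components and splitting a line into words, can be sketched in a few lines of Python. This is a simplified illustration and not how Tesseract or any other engine implements it. It assumes a binarized image given as a list of rows with 1 for ink and 0 for background, 4-connectivity between pixels, a single line of text, and a hypothetical `max_gap` parameter that decides how many empty columns still count as spacing within a word.

```python
from collections import deque


def connected_components(grid):
    """Find 4-connected groups of ink pixels and return their bounding boxes.

    Each box is (left, top, right, bottom), inclusive, in pixel coordinates.
    """
    height, width = len(grid), len(grid[0])
    seen = [[False] * width for _ in range(height)]
    boxes = []
    for y in range(height):
        for x in range(width):
            if grid[y][x] != 1 or seen[y][x]:
                continue
            # Breadth-first search over all ink pixels touching this one
            queue = deque([(x, y)])
            seen[y][x] = True
            left, top, right, bottom = x, y, x, y
            while queue:
                cx, cy = queue.popleft()
                left, right = min(left, cx), max(right, cx)
                top, bottom = min(top, cy), max(bottom, cy)
                for nx, ny in ((cx + 1, cy), (cx - 1, cy), (cx, cy + 1), (cx, cy - 1)):
                    inside = 0 <= nx < width and 0 <= ny < height
                    if inside and grid[ny][nx] == 1 and not seen[ny][nx]:
                        seen[ny][nx] = True
                        queue.append((nx, ny))
            boxes.append((left, top, right, bottom))
    return boxes


def group_into_words(boxes, max_gap):
    """Group the boxes of one text line into words.

    A horizontal gap of more than max_gap empty columns starts a new word.
    """
    words = []
    for box in sorted(boxes):  # left to right
        if words:
            word_right = max(b[2] for b in words[-1])
            if box[0] - word_right - 1 <= max_gap:
                words[-1].append(box)
                continue
        words.append([box])
    return words


# Three vertical strokes: the first two are close together, the third is far away.
line = [
    [1, 0, 1, 0, 0, 0, 1],
    [1, 0, 1, 0, 0, 0, 1],
]
boxes = connected_components(line)
print(group_into_words(boxes, max_gap=1))
```

Each resulting box would then be handed to the recognition step, whether that is matrix matching or feature extraction.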

OCR post-processing

Once an OCR engine like Tesseract has transformed the text from an input image into raw data, that data can be processed further. Frequently, the aim is to output a file that represents the initial document as closely as possible. One option is to add the recognized text in an invisible layer on top of the input image in a PDF document. This way, the visual identity of the document stays intact, and the file is also searchable.

If the document needs to be edited afterward, a different approach is more suitable, e.g., creating a Word document that tries to mimic the formatting and layout of the input image.

Achieving optimal results with OCR

A clean input image is the most important factor influencing the quality of OCR output. If possible, use a flatbed scanner with a clean surface and scan the document at 300 dpi or higher. Another important factor is using a capable OCR software solution that applies image filters to the input before character recognition takes place.

Considering the quality of most cameras built into modern smartphones, even a photo of the document can be sufficient. With appropriate software, you can achieve mobile OCR results with 99% accuracy and above, which is necessary for automatic processing. Scanbot’s Data Capture SDK combines the high-performing Tesseract engine with extensive preprocessing features and deep learning algorithms to equip any smartphone or tablet with powerful OCR capabilities.

If you are interested in using optical character recognition to optimize your business processes, feel free to get in touch with our solution experts!